Last week I was at the 2012 AGU Fall Meeting. I plan to blog about many of the talks, but let me start with the Tyndall lecture given by Ray Pierrehumbert, on “Successful Predictions”. You can see the whole talk on YouTube, so here I’ll try to give a shorter summary.

Ray’s talk spanned 120 years of research on climate change. The key message is that science is a long, slow process of discovery, in which theories (and their predictions) tend to emerge long before they can be tested. We often learn just as much from the predictions that turned out to be wrong as we do from those that were right. But successful predictions eventually form the body of knowledge that we can be sure about, not just because they were successful, but because they build up into a coherent explanation of multiple lines of evidence.

Here are the successful predictions:

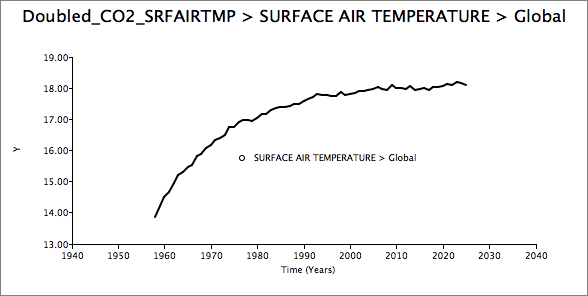

1896: Svante Arrhenius correctly predicts that increases in fossil fuel emissions would cause the earth to warm. At that time, much of the theory of how atmospheric heat transfer works was missing, but nevertheless, he got a lot of the process right. He was right that surface temperature is determined by the balance between incoming solar energy and outgoing infrared radiation, and that the balance that matters is the radiation budget at the top of the atmosphere. He knew that the absorption of infrared radiation was due to CO2 and water vapour, and he also knew that CO2 is a forcing while water vapour is a feedback. He understood the logarithmic relationship between CO2 concentrations in the atmosphere and surface temperature. However, he got a few things wrong too. His attempt to quantify the enhanced greenhouse effect was incorrect, because he worked with a 1-layer model of the atmosphere, which cannot capture the competition between water vapour and CO2, and doesn’t account for the role of convection in determining air temperatures. His calculations were incorrect because he had the wrong absorption characteristics of greenhouse gases. And he thought the problem would be centuries away, because he didn’t imagine an exponential growth in use of fossil fuels.
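To make the logarithmic relationship concrete, here is a minimal sketch in Python. It assumes equilibrium warming scales with the base-2 logarithm of the CO2 concentration ratio, so every doubling adds the same amount of warming. The function name, the 280 ppm baseline, and the 3°C-per-doubling sensitivity are illustrative assumptions of mine, not figures from the talk or from Arrhenius's paper.

```python
import math

def equilibrium_warming(c_ppm, c0_ppm=280.0, sensitivity_per_doubling=3.0):
    """Equilibrium warming (°C) when CO2 rises from c0_ppm to c_ppm.

    Assumes warming is proportional to log2 of the concentration ratio.
    The defaults (280 ppm baseline, 3 °C per doubling) are illustrative
    assumptions, not values taken from the talk.
    """
    return sensitivity_per_doubling * math.log2(c_ppm / c0_ppm)

# Each doubling (280 -> 560 -> 1120 ppm) adds the same increment of warming.
for c in (280, 400, 560, 1120):
    print(f"{c:5d} ppm -> {equilibrium_warming(c):.2f} °C")
```

The point of the sketch is the shape of the curve: going from 560 to 1120 ppm adds no more warming than going from 280 to 560 ppm, even though it takes twice as much extra CO2.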

Arrhenius, as we now know, was way ahead of his time. Nobody really considered his work again for nearly 50 years, a period we might think of as the dark ages of climate science. The story perfectly illustrates Paul Hoffman’s tongue-in-cheek depiction of how scientific discoveries work: someone formulates the theory, other scientists then reject it, ignore it for years, eventually rediscover it, and finally accept it. These “dark ages” weren’t really dark, of course – much good work was done in this period. For example:

- 1900: Frank Very worked out the radiation balance, and hence the temperature, of the moon. His results were confirmed by Pettit and Nicholson in 1930.

- 1902-14: Arthur Schuster and Karl Schwarzschild used a 2-layer radiative-convective model to explain the structure of the sun.

- 1907: Robert Emden realized that a similar radiative-convective model could be applied to planets, and Gerard Kuiper and others applied this to astronomical observations of planetary atmospheres.

This work established the standard radiative-convective model of atmospheric heat transfer. This treats the atmosphere as two layers; in the lower layer, convection is the main heat transport, while in the upper layer, it is radiation. A planet’s outgoing radiation comes from this upper layer. However, up until the early 1930s, there was no discussion in the literature of the role of carbon dioxide, despite occasional discussion of climate cycles. In 1928, George Simpson published a memoir on atmospheric radiation, which assumed water vapour was the only greenhouse gas, even though, as Richardson pointed out in a comment, there was evidence that even dry air absorbed infrared radiation.

1938: Guy Callendar is the first to link observed rises in CO2 concentrations with observed rises in surface temperatures. But Callendar failed to revive interest in Arrhenius’s work, and made a number of mistakes in things that Arrhenius had gotten right. Callendar’s calculations focussed on the radiation balance at the surface, whereas Arrhenius had (correctly) focussed on the balance at the top of the atmosphere. Callendar also neglected convective processes, which astrophysicists had already resolved using the radiative-convective model. In the end, Callendar’s work was ignored for another two decades.

1956: Gilbert Plass correctly predicts a depletion of outgoing radiation in the 15 micron band, due to CO2 absorption. This depletion was eventually confirmed by satellite measurements. Plass was one of the first to revisit Arrhenius’s work since Callendar. However, his calculations of climate sensitivity to CO2 were also wrong because, like Callendar, he focussed on the surface radiation budget rather than the top of the atmosphere.

1961-2: Carl Sagan correctly predicts very thick greenhouse gases in the atmosphere of Venus, as the only way to explain the very high observed temperatures. His calculations showed that greenhouse gases must absorb around 99.5% of the outgoing surface radiation. The composition of Venus’s atmosphere was confirmed by NASA’s Venus probes in 1967-70.

1959: Bert Bolin and Erik Eriksson correctly predict the exponential increase in CO2 concentrations in the atmosphere as a result of rising fossil fuel use. At that time they did not have good data for atmospheric concentrations prior to 1958, hence their hindcast back to 1900 was wrong, but despite this, their projection for changes forward to 2000 was remarkably good.

1967: Suki Manabe and Dick Wetherald correctly predict that warming in the lower atmosphere would be accompanied by stratospheric cooling. They had built the first completely correct radiative-convective implementation of the standard model applied to Earth, and used it to calculate a +2°C equilibrium warming for doubling CO2, including the water vapour feedback, assuming constant relative humidity. The stratospheric cooling was confirmed in 2011 by Gillett et al.

1975: Suki Manabe and Dick Wetherald correctly predict that the surface warming would be much greater in the polar regions, and that there would be some upper troposphere amplification in the tropics. This was the first coupled general circulation model (GCM), with an idealized geography. This model computed changes in humidity, rather than assuming it, as had been the case in earlier models. It showed polar amplification, and some vertical amplification in the tropics. The polar amplification was measured, and confirmed by Serreze et al. in 2009. However, the height gradient in the tropics hasn’t yet been confirmed (nor has it yet been falsified – see Thorne 2008 for an analysis).

1989: Ron Stouffer et al. correctly predict that the land surface will warm more than the ocean surface, and that the Southern Ocean warming would be temporarily suppressed due to the slower ocean heat uptake. These predictions are correct, although these models failed to predict the strong warming we’ve seen over the Antarctic Peninsula.

Of course, scientists often get it wrong:

1900: Knut Ångström incorrectly predicts that increasing levels of CO2 would have no effect on climate, because he thought the effect was already saturated. His laboratory experiments weren’t accurate enough to detect the actual absorption properties, and even if they had been, the vertical structure of the atmosphere would still allow the greenhouse effect to grow as CO2 is added.

1971: Rasool and Schneider incorrectly predict that atmospheric cooling due to aerosols would outweigh the warming from CO2. However, their model had some important weaknesses, and was shown to be wrong by 1975. Rasool and Schneider fixed their model and moved on. Good scientists acknowledge their mistakes.

1993: Richard Lindzen incorrectly predicts that warming will dry the troposphere, according to his theory that a negative water vapour feedback keeps climate sensitivity to CO2 really low. Lindzen’s work attempted to resolve a long-standing conundrum in climate science. In 1981, the CLIMAP project reconstructed temperatures at the Last Glacial Maximum, and showed very little tropical cooling. This was inconsistent with the general circulation models (GCMs), which predicted substantial cooling in the tropics (e.g. see Broccoli & Manabe 1987). So everyone thought the models must be wrong. Lindzen attempted to explain the CLIMAP results via a negative water vapour feedback. But then the CLIMAP results started to unravel, and newer proxies demonstrated that it was the CLIMAP data that was wrong, rather than the models. It eventually turned out that the models were getting it right, and that Lindzen’s theories were wrong along with the CLIMAP data. Unfortunately, bad scientists don’t acknowledge their mistakes; Lindzen keeps inventing ever more arcane theories to avoid admitting he was wrong.

1995: John Christy and Roy Spencer incorrectly calculate that the lower troposphere is cooling, rather than warming. Again, this turned out to be wrong, once errors in satellite data were corrected.

In science, it’s okay to be wrong, because exploring why something is wrong usually advances the science. But sometimes, theories are published that are so bad, they are not even wrong:

2007: Courtillot et al. predicted a connection between cosmic rays and climate change. But they couldn’t even get the sign of the effect consistent across the paper. You can’t falsify a theory that’s incoherent! Scientists label this kind of thing as “Not even wrong”.

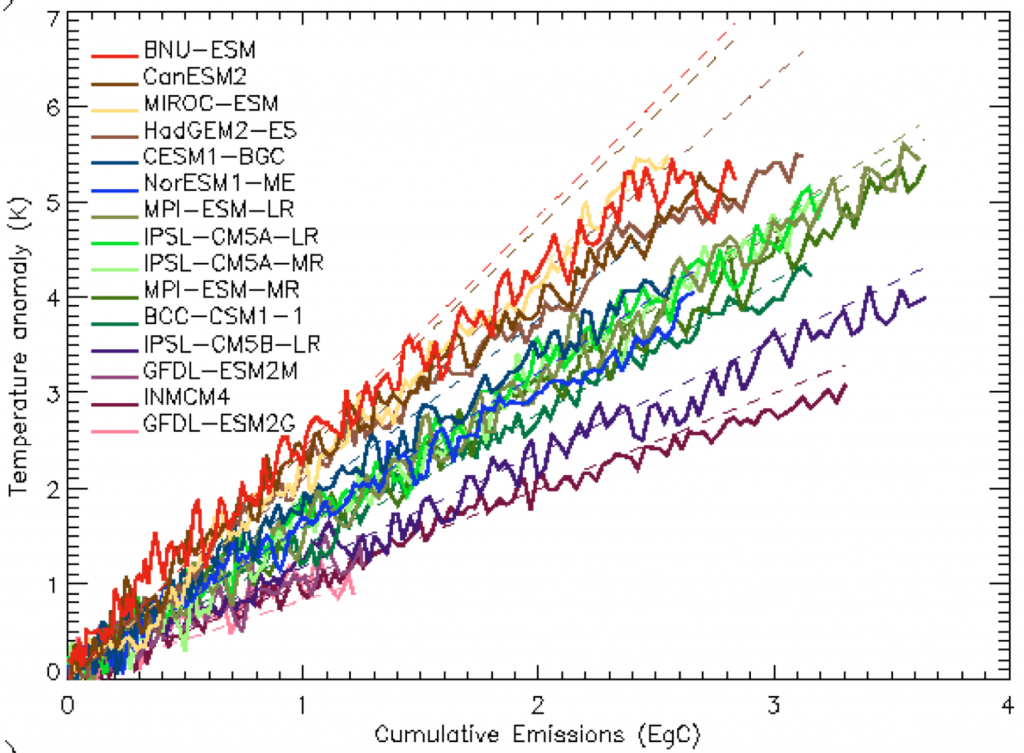

Finally, there are, of course, some things that scientists didn’t predict. The most important of these is probably the multi-decadal fluctuations in the warming signal. If you calculate the radiative effect of all greenhouse gases, and the delay due to ocean heating, you still can’t reproduce the flat period in the temperature trend that was observed in 1950-1970. While this wasn’t predicted, we ought to be able to explain it after the fact. Currently, there are two competing explanations. The first is that the ocean heat uptake itself has decadal fluctuations, although models don’t show this. However, if climate sensitivity is at the low end of the likely range (say 2°C per doubling of CO2), it’s possible we’re seeing a decadal fluctuation around a warming signal. The other explanation is that aerosols took some of the warming away from GHGs. This explanation requires a higher value for climate sensitivity (say around 3°C), but with a significant fraction of the warming counteracted by an aerosol cooling effect. If this explanation is correct, it’s a much more frightening world, because it implies much greater warming as CO2 levels continue to increase. The truth is probably somewhere between these two. (See Armour & Roe, 2011 for a discussion.)
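The difference between the two explanations can be illustrated with a toy calculation. The Python sketch below scales an equilibrium temperature response linearly with net forcing and ignores the ocean delay entirely. The 3.7 W/m² forcing per CO2 doubling is a commonly quoted approximation, while the greenhouse gas and aerosol forcing values are made-up numbers chosen only so that both cases give the same warming to date; none of them come from the talk.

```python
# Toy equilibrium energy-balance comparison; it ignores ocean heat uptake.
F_2X = 3.7  # W/m^2 per CO2 doubling (commonly quoted approximation; an assumption here)

def equilibrium_response(sensitivity, ghg_forcing, aerosol_forcing=0.0):
    """Warming (°C) for a given sensitivity (°C per doubling) and net forcing (W/m^2)."""
    return sensitivity * (ghg_forcing + aerosol_forcing) / F_2X

GHG_FORCING = 2.4  # W/m^2, hypothetical value for illustration only

# Explanation 1: low sensitivity, no aerosol offset.
low = equilibrium_response(2.0, GHG_FORCING)
# Explanation 2: higher sensitivity, with aerosols masking a third of the GHG forcing.
high = equilibrium_response(3.0, GHG_FORCING, aerosol_forcing=-0.8)
print(f"warming so far: low-sensitivity {low:.2f} °C, high-sensitivity {high:.2f} °C")

# At a full CO2 doubling with no aerosol masking, the two cases diverge.
print(f"at doubling: {equilibrium_response(2.0, F_2X):.1f} °C "
      f"vs {equilibrium_response(3.0, F_2X):.1f} °C")
```

With these invented numbers, both cases produce the same warming so far, which is why the historical record alone struggles to tell them apart. They only separate as CO2 keeps rising, and the aerosol case is the one that ends up warmer.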

To conclude, climate scientists have made many predictions about the effect of increasing greenhouse gases that have proven to be correct. They have earned a right to be listened to, but is anyone actually listening? If we fail to act upon the science, will future archaeologists wade through AGU abstracts and try to figure out what went wrong? There are signs of hope – in his re-election acceptance speech, President Obama revived his pledge to take action, saying “We want our children to live in an America that …isn’t threatened by the destructive power of a warming planet.”