Today I’ve been tracking down the origin of the term “Greenhouse Effect”. The term itself is problematic, because it only works as a weak metaphor: both the atmosphere and a greenhouse let the sun’s rays through, and then trap some of the resulting heat. But the mechanisms are different. A greenhouse stays warm by preventing warm air from escaping. In other words, it blocks convection. The atmosphere keeps the planet warm by preventing (some wavelengths of) infra-red radiation from escaping. The “greenhouse effect” is really the result of many layers of air, each absorbing infra-red from the layer below, and then re-emitting it both up and down. The rate at which the planet then loses heat is determined by the average temperature of the topmost layer of air, where this infra-red finally escapes to space. So not really like a greenhouse at all.
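For anyone who wants to play with the “many layers” picture, here’s a minimal sketch in Python. It is a toy model under assumptions that are mine, not taken from any of the papers discussed below: each layer is transparent to sunlight, absorbs all the infra-red that reaches it, and re-emits equally up and down. The solar constant and albedo are assumed round values, and the function names are just illustrative.

```python
# Toy "stacked layers" model of the greenhouse effect (illustrative only).
# Assumptions (not from the post): each layer is transparent to sunlight,
# absorbs all infra-red reaching it, and emits equally up and down.

SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0  # W m^-2, assumed value
ALBEDO = 0.3             # assumed planetary albedo


def emission_temperature(solar_constant=SOLAR_CONSTANT, albedo=ALBEDO):
    """Temperature at which the planet radiates away the sunlight it absorbs."""
    absorbed = solar_constant * (1.0 - albedo) / 4.0  # averaged over the sphere
    return (absorbed / SIGMA) ** 0.25


def layer_temperatures(n_layers):
    """Return (layer temperatures from top to bottom, surface temperature).

    Energy balance for each layer gives T_k^4 = k * T_e^4, counting from the
    top (k = 1), and T_surface^4 = (n_layers + 1) * T_e^4.
    """
    t_e = emission_temperature()
    layers = [t_e * k ** 0.25 for k in range(1, n_layers + 1)]
    surface = t_e * (n_layers + 1) ** 0.25
    return layers, surface


if __name__ == "__main__":
    for n in (0, 1, 2, 3):
        layers, surface = layer_temperatures(n)
        print(f"{n} layers: surface {surface:.1f} K")
```

In this sketch the topmost layer always sits at the emission temperature, however many layers you add, while the surface gets warmer with each extra layer. The real atmosphere is not a stack of perfectly opaque slabs, so the numbers are only indicative.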

So how did the effect acquire this name? The 19th-century French mathematician Joseph Fourier is usually credited as the originator of the idea in the 1820s. However, it turns out he never used the term, and as James Fleming (1999) points out, most authors writing about the history of the greenhouse effect cite only secondary sources on this, without actually reading any of Fourier’s work. Fourier does mention greenhouses in his 1822 classic “Analytical Theory of Heat”, but not in connection with planetary temperatures. The book was published in French, so he uses the French “les serres”, but it appears only once, in a passage on properties of heat in enclosed spaces. The relevant paragraph translates as:

“In general the theorems concerning the heating of air in closed spaces extend to a great variety of problems. It would be useful to revert to them when we wish to foresee and regulate the temperature with precision, as in the case of green-houses, drying-houses, sheep-folds, work-shops, or in many civil establishments, such as hospitals, barracks, places of assembly” [Fourier, 1822; appears on p73 of the edition translated by Alexander Freeman, published 1878, Cambridge University Press]

In his other writings, Fourier did hypothesize that the atmosphere plays a role in slowing the rate of heat loss from the surface of the planet to space, hence keeping the ground warmer than it might otherwise be. However, he never identified a mechanism, as the properties of what we now call greenhouse gases weren’t established until John Tyndall’s experiments in the 1850s. In explaining his hypothesis, Fourier refers to a “hotbox”, a device invented by the explorer de Saussure to measure the intensity of the sun’s rays. The hotbox had several layers of glass in the lid, which allowed the sun’s rays to enter but blocked the escape of the heated air via convection. But it was only a metaphor. Fourier understood that whatever the heat-trapping mechanism in the atmosphere was, it didn’t actually block convection.

Svante Arrhenius was the first to attempt a detailed calculation of the effect of changing levels of carbon dioxide in the atmosphere, in 1896, in his quest to test a hypothesis that the ice ages were caused by a drop in CO2. Accordingly, he’s also sometimes credited with inventing the term. However, he also didn’t use the term “greenhouse” in his papers, although he did invoke a metaphor similar to Fourier’s, using the Swedish word “drivbänk”, which translates as hotbed (Update: or possibly “hothouse” – see comments).

So the term “greenhouse effect” wasn’t coined until the 20th Century. Several of the papers I’ve come across suggest that the first use of the term “greenhouse” in this connection in print was in 1909, in a paper by Wood. This seems rather implausible though, because the paper in question is really only a brief commentary explaining that the idea of a “greenhouse effect” makes no sense, as a simple experiment shows that greenhouses don’t work by trapping outgoing infra-red radiation. The paper is clearly reacting to something previously published on the greenhouse effect, and which Wood appears to take way too literally.

A little digging produces a 1901 paper by Nils Ekholm, a Swedish meteorologist who was a close colleague of Arrhenius, which does indeed use the term ‘greenhouse’. At first sight, he seems to use the term more literally than is warranted, although in subsequent paragraphs, he explains the key mechanism fairly clearly:

“The atmosphere plays a very important part of a double character as to the temperature at the earth’s surface, of which the one was first pointed out by Fourier, the other by Tyndall. Firstly, the atmosphere may act like the glass of a green-house, letting through the light rays of the sun relatively easily, and absorbing a great part of the dark rays emitted from the ground, and it thereby may raise the mean temperature of the earth’s surface. Secondly, the atmosphere acts as a heat store placed between the relatively warm ground and the cold space, and thereby lessens in a high degree the annual, diurnal, and local variations of the temperature.

There are two qualities of the atmosphere that produce these effects. The one is that the temperature of the atmosphere generally decreases with the height above the ground or the sea-level, owing partly to the dynamical heating of descending air currents and the dynamical cooling of ascending ones, as is explained in the mechanical theory of heat. The other is that the atmosphere, absorbing but little of the insolation and the most of the radiation from the ground, receives a considerable part of its heat store from the ground by means of radiation, contact, convection, and conduction, whereas the earth’s surface is heated principally by direct radiation from the sun through the transparent air.

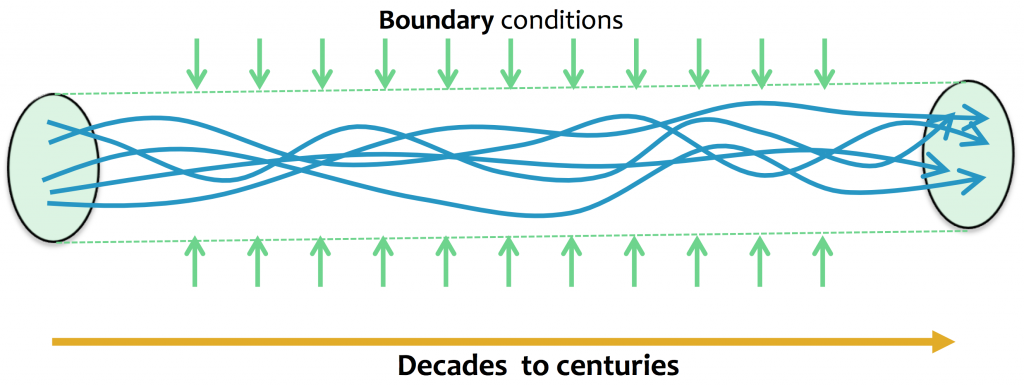

It follows from this that the radiation from the earth into space does not go on directly from the ground, but on the average from a layer of the atmosphere having a considerable height above sea-level. The height of that layer depends on the thermal quality of the atmosphere, and will vary with that quality. The greater is the absorbing power of the air for heat rays emitted from the ground, the higher will that layer be. But the higher the layer, the lower is its temperature relatively to that of the ground; and as the radiation from the layer into space is the less the lower its temperature is, it follows that the ground will be hotter the higher the radiating layer is.” [Ekholm, 1901, p19-20]
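Ekholm’s argument in that last paragraph is easy to put into numbers. Here’s a rough sketch, under my own assumptions rather than anything in his paper: the radiating layer is held at the temperature needed to balance the absorbed sunlight (about 255 K), and temperature rises at a constant rate as you descend from that layer to the ground. The lapse rate of 6.5 K per km and the function name are assumed for illustration.

```python
# Sketch of Ekholm's argument: the ground temperature follows from the
# temperature of the radiating layer plus the warming on the way down.
# All numbers are assumed round values, not figures from Ekholm's paper.

def ground_temperature(layer_height_km, layer_temp_k=255.0, lapse_rate_k_per_km=6.5):
    """Ground temperature given the height of the effective radiating layer."""
    return layer_temp_k + lapse_rate_k_per_km * layer_height_km


if __name__ == "__main__":
    for height in (4.0, 5.0, 6.0):
        print(f"radiating layer at {height} km: ground {ground_temperature(height):.1f} K")
```

With these assumed values, every extra kilometre of height for the radiating layer adds 6.5 K at the ground, which is the sense in which “the ground will be hotter the higher the radiating layer is”.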

At this point, it’s still not called the “greenhouse effect”, but this metaphor does appear to have become a standard way of introducing the concept. But in 1907, the English scientist John Henry Poynting confidently introduces the term “greenhouse effect” in his criticism of Percival Lowell’s analysis of the temperature of the planets. He uses it in scare quotes throughout the paper, which suggests the term is newly minted:

“Prof. Lowell’s paper in the July number of the Philosophical Magazine marks an important advance in the evaluation of planetary temperatures, inasmuch as he takes into account the effect of planetary atmospheres in a much more detailed way than any previous writer. But he pays hardly any attention to the “blanketing effect,” or, as I prefer to call it, the “greenhouse effect” of the atmosphere.” [Poynting, 1907, p749]

And he goes on:

“The “greenhouse effect” of the atmosphere may perhaps be understood more easily if we first consider the case of a greenhouse with horizontal roof of extent so large compared with its height above the ground that the effect of the edges may be neglected. Let us suppose that it is exposed to a vertical sun, and that the ground under the glass is “black” or a full absorber. We shall neglect the conduction and convection by the air in the greenhouse.” [Poynting, 1907, p750]

He then goes on to explore the mathematics of heat transfer in this idealized greenhouse. Unfortunately, he ignores Ekholm’s crucial observation that it is the rate of heat loss at the upper atmosphere that matters, so his calculations are mostly useless. But his description of the mechanism does appear to have taken hold as the dominant explanation. The following year, Frank Very published a response (in the same journal), using the term “Greenhouse Theory” in the title of the paper. He criticizes Poynting’s idealized greenhouse as way too simplistic, but suggests a slightly better metaphor is a set of greenhouses stacked one above another, each of which traps a little of the heat from the one below:

It is true that Professor Lowell does not consider the greenhouse effect analytically and obviously, but it is nevertheless implicitly contained in his deduction of the heat retained, obtained by the method of day and night averages. The method does not specify whether the heat is lost by radiation or by some more circuitous process; and thus it would not be precise to label the retaining power of the atmosphere a “greenhouse effect” without giving a somewhat wider interpretation to this name. If it be permitted to extend the meaning of the term to cover a variety of processes which lead to identical results, the deduction of the loss of surface heat by comparison of day and night temperatures is directly concerned with this wider “greenhouse effect.” [Very, 1908, p477]

Between them, Poynting and Very are attempting to pin down whether the “greenhouse effect” is a useful metaphor, and how the heat transfer mechanisms of planetary atmospheres actually work. But in so doing, they help establish the name. Wood’s 1909 comment is clearly a reaction to this discussion, but one that fails to understand what is being discussed. It’s eerily reminiscent of any modern discussion of the greenhouse effect: whenever any two scientists discuss the details of how the greenhouse effect works, you can be sure someone will come along sooner or later claiming to debunk the idea by completely misunderstanding it.

In summary, I think it’s fair to credit Poynting as the originator of the term “greenhouse effect”, but with a special mention to Ekholm for both his prior use of the word “greenhouse”, and his much better explanation of the effect. (Unless I missed some others?)

References

Arrhenius, S. (1896). On the Influence of Carbonic Acid in the Air upon the Temperature of the Ground. Philosophical Magazine and Journal of Science, 41(251). doi:10.1080/14786449608620846

Ekholm, N. (1901). On The Variations Of The Climate Of The Geological And Historical Past And Their Causes. Quarterly Journal of the Royal Meteorological Society, 27(117), 1–62. doi:10.1002/qj.49702711702

Fleming, J. R. (1999). Joseph Fourier, the “greenhouse effect”, and the quest for a universal theory of terrestrial temperatures. Endeavour, 23(2), 72–75. doi:10.1016/S0160-9327(99)01210-7

Fourier, J. (1822). Théorie Analytique de la Chaleur (“Analytical Theory of Heat”). Paris: Chez Firmin Didot, Père et Fils.

Fourier, J. (1827). On the Temperatures of the Terrestrial Sphere and Interplanetary Space. Mémoires de l’Académie Royale Des Sciences, 7, 569–604. (translation by Ray Pierrehumbert)

Poynting, J. H. (1907). On Prof. Lowell’s Method for Evaluating the Surface-temperatures of the Planets; with an Attempt to Represent the Effect of Day and Night on the Temperature of the Earth. Philosophical Magazine, 14(84), 749–760.

Very, F. W. (1908). The Greenhouse Theory and Planetary Temperatures. Philosophical Magazine, 16(93), 462–480.

Wood, R. W. (1909). Note on the Theory of the Greenhouse. Philosophical Magazine, 17, 319–320. Retrieved from http://scienceblogs.com/stoat/2011/01/07/r-w-wood-note-on-the-theory-of/