This week, I presented our poster on Benchmarking and Assessment of Homogenisation Algorithms for the International Surface Temperature Initiative (ISTI) at the WCRP Open Science Conference (click on the poster for a readable version).

This work is part of the International Surface Temperature Initiative (ISTI) that I blogged about last year. The intent is to create a new open-access database for historical surface temperature records at a much higher resolution than has previously been available. In the past, only monthly averages were widely available; daily and sub-daily observations collected by meteorological services around the world are often considered commercially valuable, and hence tend to be hard to obtain. And if you go back far enough, much of the data was never digitized and some is held in deteriorating archives.

The goal of the benchmarking part of the project is to assess the effectiveness of the tools used to remove data errors from the raw temperature records. My interest in this part of the project stems from the work that my student, Susan Sim, did a few years ago on the role of benchmarking to advance research in software engineering. Susan’s PhD thesis described a theory that explains why benchmarking efforts tend to accelerate progress within a research community. The main idea is that creating a benchmark brings the community together to build consensus on what the key research problem is, what sample tasks are appropriate to show progress, and what metrics should be used to measure that progress. The benchmark then embodies this consensus, allowing different research groups to do detailed comparisons of their techniques, and facilitating sharing of approaches that work well.

Of course, it’s not all roses. Developing a benchmark in the first place is hard, and requires participation from across the community; a benchmark put forward by a single research group is unlikely to be accepted as unbiased by other groups. This also means that a research community has to be sufficiently mature in terms of its collaborative relationships and consensus on common research problems (in Kuhnian terms, it must be in the normal science phase). Also, note that a benchmark is anchored to a particular stage of the research, as it captures problems that are currently challenging; continued use of a benchmark after a few years can lead to a degeneration of the research, with groups over-fitting to the benchmark, rather than moving on to harder challenges. Hence, it’s important to retire a benchmark every few years and replace it with a new one.

The benchmarks we’re exploring for the ISTI project are intended to evaluate homogenization algorithms. These algorithms detect and remove artifacts in the data that are due to things that have nothing to do with climate – for example when instruments designed to collect short-term weather data don’t give consistent results over the long-term record. The technical term for these is inhomogeneities, but I’ll try to avoid the word, not least because I find it hard to say. I’d like to call them anomalies, but that word is already used in this field to mean differences in temperature due to climate change. This means that anomalies and inhomogeneities are, in some ways, opposites: anomalies are the long-term warming signal that we’re trying to assess, and inhomogeneities represent data noise that we have to get rid of first. I think I’ll just call them bad data.

Bad data arise for a number of reasons, usually isolated to changes at individual recording stations: a change of instruments, an instrument drifting out of calibration, a re-siting, a slow encroachment of urbanization which changes the local micro-climate. Because these problems tend to be localized, they can often be detected by statistical algorithms that compare individual stations with their neighbours. In essence, the algorithms look for step changes and spurious trends in the data such as the following:
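To make the neighbour comparison concrete, here is a minimal Python sketch. It is not one of the homogenization algorithms the benchmark will assess; it only illustrates the idea of looking for a step change in the difference between a station and the average of its neighbours. The synthetic data, the function names and the `min_segment` parameter are all assumptions made for illustration.

```python
import random
from statistics import mean

def difference_series(candidate, neighbours):
    """Candidate station minus the mean of its neighbours, month by month.

    The shared regional climate signal largely cancels out, so what is
    left is mostly station-specific noise plus any non-climatic shift.
    """
    return [c - mean(vals) for c, vals in zip(candidate, zip(*neighbours))]

def find_step(diff, min_segment=12):
    """Return (index, shift) for the split with the largest jump in mean.

    Every split point is tried, keeping at least `min_segment` values on
    each side, and the mean after the split is compared with the mean
    before it.
    """
    best_index, best_shift = None, 0.0
    for i in range(min_segment, len(diff) - min_segment + 1):
        shift = mean(diff[i:]) - mean(diff[:i])
        if abs(shift) > abs(best_shift):
            best_index, best_shift = i, shift
    return best_index, best_shift

# Synthetic example: 20 years of monthly values sharing one regional signal.
rng = random.Random(0)
n_months = 240
regional = [rng.gauss(0.0, 1.0) for _ in range(n_months)]
neighbours = [[r + rng.gauss(0.0, 0.2) for r in regional] for _ in range(5)]
candidate = [r + rng.gauss(0.0, 0.2) for r in regional]

# Simulate a re-siting: the candidate reads 1.0 degree warmer from month 120.
candidate = [v + (1.0 if t >= 120 else 0.0) for t, v in enumerate(candidate)]

diff = difference_series(candidate, neighbours)
break_index, shift = find_step(diff)
print(break_index, round(shift, 2))
```

Working on the difference series rather than the raw record is the key design choice: real climate variability affects the station and its neighbours alike, so it drops out, while a change local to one station does not.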

These bad data are a serious problem in climate science – for a recent example, see the post yesterday at RealClimate, which discusses how homogenization algorithms might have gotten in the way of understanding the relationship between climate change and the Russian heatwave of 2010. Unhelpfully, they’re also used by deniers to beat up climate scientists, as some people latched onto the idea of blaming warming trends on bad data rather than, say, actual warming. Of course, this ignores two facts: (1) climate scientists already spend a lot of time assessing and removing such bad data and (2) independent analysis has repeatedly shown that the global warming signal is robust with respect to such data problems.

However, such problems in the data still matter for the detailed regional assessments that we’ll need in the near future for identifying vulnerabilities (e.g. to extreme weather), and, as the example at RealClimate shows, for attribution studies for localized weather events and hence for decision-making on local and regional adaptation to climate change.

The challenge is that it’s hard to test how well homogenization algorithms work, because we don’t have access to the truth – the actual temperatures that the observational records should have recorded. The ISTI benchmarking project aims to fill this gap by creating a data set that has been seeded with artificial errors. The approach reminds me of the software engineering technique of bug seeding (aka mutation testing), which deliberately introduces errors into software to assess how good the test suite is at detecting them.

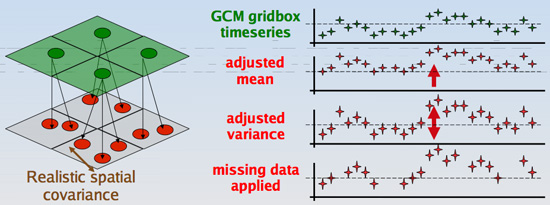

The first challenge is where to get a “clean” temperature record to start with, because the assessment is much easier if the only bad data in the sample are the ones we deliberately seeded. The technique we’re exploring is to start with the output of a Global Climate Model (GCM), which is probably the closest we can get to a globally consistent temperature record. The GCM output is on a regular grid, and may not always match the observational temperature record in terms of means and variances. So to make it as realistic as possible, we have to downscale the gridded data to yield a set of “station records” that match the location of real observational stations, and adjust the means and variances to match the real world:
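The adjustment of means and variances can be sketched as a simple linear rescaling. This is only an illustration of that one step, under the assumption that a model-derived series has already been extracted for a station's location; the actual downscaling procedure is more involved, and the function name and sample values below are hypothetical.

```python
from statistics import mean, pstdev

def match_mean_and_variance(model_series, observed_series):
    """Rescale a model-derived series to the observed mean and spread.

    The shape of the model series is preserved; only its mean and
    standard deviation are changed to match the observed station record.
    """
    m_mean, m_sd = mean(model_series), pstdev(model_series)
    o_mean, o_sd = mean(observed_series), pstdev(observed_series)
    if m_sd == 0:
        raise ValueError("model series has no variability to rescale")
    scale = o_sd / m_sd
    return [o_mean + (x - m_mean) * scale for x in model_series]

# Hypothetical values: model output for a grid cell vs. a real station.
model = [0.0, 1.0, 2.0, 3.0]
observed = [10.0, 12.0, 15.0, 11.0]
adjusted = match_mean_and_variance(model, observed)
print(adjusted)
```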

Then we inject the errors. Of course, the error profile we use is based on what we currently know about typical kinds of bad data in surface temperature records. It’s always possible there are other types of error in the raw data that we don’t yet know about; that’s one of the reasons for planning to retire the benchmark periodically and replace it with a new one – it allows new findings about error profiles to be incorporated.
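As a rough sketch of what injecting errors might look like, the Python below adds two of the kinds of bad data mentioned earlier (an abrupt shift, as from a re-siting, and a gradual drift, as from encroaching urbanization) and keeps a log of what was seeded. The function names, parameters and error sizes are assumptions for illustration, not the project's actual error profile.

```python
import random

def inject_step(series, start, size):
    """Add a constant offset `size` from index `start` onwards."""
    return [v + size if t >= start else v for t, v in enumerate(series)]

def inject_drift(series, start, end, total):
    """Add a linear ramp from 0 at `start` to `total` at `end`, held afterwards."""
    out = []
    for t, v in enumerate(series):
        if t < start:
            offset = 0.0
        elif t < end:
            offset = total * (t - start) / (end - start)
        else:
            offset = total
        out.append(v + offset)
    return out

def seed_errors(clean, rng, n_steps=2, max_size=1.0):
    """Seed random step changes and return (seeded series, log of injections).

    The log is the "truth" that would be kept secret until the end of
    the benchmarking cycle.
    """
    seeded = list(clean)
    log = []
    for _ in range(n_steps):
        start = rng.randrange(1, len(clean))
        size = rng.uniform(-max_size, max_size)
        seeded = inject_step(seeded, start, size)
        log.append({"kind": "step", "start": start, "size": size})
    return seeded, log

# Hypothetical clean record: ten years of monthly values, all zero.
clean = [0.0] * 120
seeded, log = seed_errors(clean, random.Random(42))
drifted = inject_drift([0.0] * 5, start=1, end=3, total=1.0)
print(log)
print(drifted)
```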

Once the benchmark is created, it will be used within the community to assess different homogenization algorithms. Initially, the actual injected error profile will be kept secret, to ensure the assessment is honest. Towards the end of the 3-year benchmarking cycle, we will release the details about the injected errors, to allow different research groups to measure how well they did. Details of the results will then be included in the ISTI dataset for any data products that use the homogenization algorithms, so that users of these data products have more accurate estimates of uncertainty in the temperature record. Such estimates are important, because use of the processed data without a quantification of uncertainty can lead to misleading or incorrect research.
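Once the injected errors are released, one simple way a group could measure how well it did is to compare the break positions its algorithm reported against the ones that were seeded. The sketch below is only one possible scoring scheme; the metrics (hits, misses, false alarms) and the `tolerance` window are assumptions, not the measures the working group has agreed on.

```python
def score_breaks(detected, injected, tolerance=6):
    """Match detected break positions to injected ones within a tolerance.

    Each injected break can be matched at most once. Positions are
    indices into the series (e.g. months since the start of the record).
    """
    unmatched = list(injected)
    hits = 0
    for d in sorted(detected):
        match = next((b for b in unmatched if abs(b - d) <= tolerance), None)
        if match is not None:
            unmatched.remove(match)
            hits += 1
    return {
        "hits": hits,
        "misses": len(unmatched),
        "false_alarms": len(detected) - hits,
    }

# Hypothetical result: one break found two months early, one spurious
# detection, and one seeded break missed entirely.
result = score_breaks(detected=[118, 300], injected=[120, 200])
print(result)
```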

For more details of the project, see the Benchmarking and Assessment Working Group website, and the group blog.

Maybe we should have a competition for a nicer word to replace “inhomogeneities”.

I am sorry to have to say that “Bad data” is definitely not the right word. There are cases where there is a true measurement error and the data is thus bad, but quite often the data is fine, just inhomogeneous.

If you move a climate station, the data is not measured badly at either location. A weather shelter that protects the thermometer well against getting wet in the rain (which cools it), but is less well ventilated, may be replaced by one that is better ventilated. Ventilation, wetting protection and radiation protection are conflicting requirements for a shelter. Making another compromise is not wrong, just different.

The trickiest problem is a change in the surroundings of the station: for instance, a swampy area that over the decades becomes a forest (some distance from the station), or warming due to the urban heat island effect. In both cases the measurements are right, and useful for someone interested in the local climate (for example, someone asking how many people die because night temperatures are too high). If you are studying global warming, you are not interested in such local effects and would like to remove them by homogenization. Thus the raw data is “bad” only for someone interested in global warming, not for everyone; it depends on your scientific question.

Climate deniers like to act as if climate science is big business. The truth is, meteorology is much bigger than climate science and the data is mostly gathered for meteorological purposes. For meteorologists, the small inhomogeneities that climatologists are interested in are inconsequential. For them the data is good enough.

Does anyone have an idea for a better word?

@Victor Venema: Point taken. I guess I could still get away with “bad data” if I’m careful to indicate I mean “bad for a specific purpose”, which in this case is analysis of long-term climate trends.

One of my students suggested that “inhomogeneities” = “heterogeneities”, which I think is technically correct, but probably not much better for communicating with a lay audience.

A politically correct term for “bad” data could be “climatologically-challenged” data.

The terms for the breaks, which Lucie Vincent suggested, are quite good: “non-climatic shifts” or “non-climatic changes”.