I’m on my way back from a workshop on Computing in the Atmospheric Sciences, in Annecy, France. The opening keynote, by Gerald Meehl of NCAR, gave us a fascinating overview of the CMIP5 model experiments that will form a key part of the upcoming IPCC Fifth Assessment Report. I’ve been meaning to write about the CMIP5 experiments for ages, as the modelling groups were all busy getting their runs started when I visited them last year. As Jerry’s overview was excellent, this gives me the impetus to write up a blog post. The rest of this post is a summary of Jerry’s talk.

Jerry described CMIP5 as “the most ambitious and computer-intensive inter-comparison project ever attempted”, and having seen many of the model labs working hard to get the model runs started last summer, I think that’s an apt description. More than 20 modelling groups around the world are expected to participate, supplying a total estimated dataset of more than 2 petabytes.

It’s interesting to compare CMIP5 to CMIP3, the model intercomparison project for the last IPCC assessment. CMIP3 began in 2003, and was, at that time, itself an unprecedented set of coordinated climate modelling experiments. It involved 16 groups from 11 countries, with 23 models (some groups contributed more than one model). The resulting CMIP3 dataset, hosted at PCMDI, is 31 terabytes, is openly accessible, has been accessed by more than 1200 scientists, and has generated hundreds of papers; use of this data is still ongoing. The ‘iconic’ figures for future projections of climate change in IPCC AR4 are derived from this dataset (see for example, Figure 10.4 which I’ve previously critiqued).

Most of the CMIP3 work was based on the IPCC SRES “what if” scenarios, which offer different views on future economic development and fossil fuel emissions, but none of which include a serious climate mitigation policy.

By 2006, during the planning of the next IPCC assessment, it was already clear that a profound paradigm shift was in progress. The idea of climate services had emerged, with a growing demand from industry, government and other groups for detailed regional information about the impacts of climate change, and a growing need to explicitly consider mitigation and adaptation scenarios. And of course the questions are connected: With different mitigation choices, what are the remaining regional climate effects that adaptation will have to deal with?

So, CMIP5 represents a new paradigm for climate change prediction:

- Decadal prediction, with high resolution Atmosphere-Ocean General Circulation Models (AOGCMs), with, say, 50km grids, initialized to explore near-term climate change over the next three decades.

- First generation Earth System Models, which include a coupled carbon cycle and ice sheet models, typically run at intermediate resolution (100-150km grids) to study longer term feedbacks past mid-century, using a new set of scenarios that include both mitigation and non-mitigation emissions profiles.

- Stronger links between communities – e.g. WCRP, IGBP, and the weather prediction community, but most importantly, stronger interaction between the three working groups of the IPCC: WG1 (which looks at the physical science basis), WG2 (which looks at impacts, adaptation and vulnerability), and WG3 (integrated assessment modelling and scenario development). The lack of interaction between WG1 and the others has been a problem in the past, especially as WG2 and WG3 are the ones trying to understand the impacts of different policy choices.

The model experiments for CMIP5 are not dictated by IPCC, but selected by the climate science community itself. A large set of experiments has been identified, intended to provide a 5-year framework (2008-2013) for climate change modelling. As not all modelling groups will be able to run all the experiments, they have been prioritized into three clusters: A core set that everyone will run, and two tiers of optional experiments. Experiments that are completed by early 2012 will be analyzed in the next IPCC assessment (due for publication in 2013).

The overall design for the set of experiments is broken out into two clusters (near-term, i.e. decadal runs; and long-term, i.e. century and longer), designed for different types of model (although for some centres, this really means different configurations of the same model code, if their models can be run at very different resolutions). In both cases, the core experiment set includes runs of both past and future climate. The past runs are used as hindcasts to assess model skill. Here are the decadal experiments, showing the core set in the middle, and tier 1 around the edge (there’s no tier 2 for these, as there aren’t so many decadal experiments):

These experiments include some very computationally demanding runs at very high resolution, and include the first generation of global cloud-resolving models. For example, the prescribed SST time-slices experiments include two periods (1979-2008 and 2026-2035) where prescribed sea-surface temperatures taken from lower resolution, fully-coupled model runs will be used as a basis for very high resolution atmosphere-only runs. The intent of these experiments is to explore the local/regional effects of climate change, including on hurricanes and extreme weather events.

Here’s the set of experiments for the longer-term cluster, marked up to indicate three different uses: model evaluation (where the runs can be compared to observations to identify weaknesses in the models and explore reasons for divergences between the models); climate projections (to show what the models do on four representative scenarios, at least to the year 2100, and, for some runs, out to the year 2300); and understanding (including thought experiments, such as the Aqua planet with no land mass, and abrupt changes in GHG concentrations):

These experiments include a much wider range of scientific questions than earlier IPCC assessments (which is why there are so many more experiments this time round). Here’s another way of grouping the long-term runs, showing the collaborations with the many different research communities who are participating:

With these experiments, some crucial science questions will be addressed:

- what are the time-evolving changes in regional climate and extremes over the next few decades?

- what are the size and nature of the carbon cycle and other feedbacks in the climate system, and what will be the resulting magnitude of change for different mitigation scenarios?

The long-term experiments are based on a new set of scenarios that represent a very different approach than was used in the last IPCC assessment. The new scenarios are called Representative Concentration Pathways (RCPs), although as Jerry points out, the name is a little confusing. I’ll write more about the RCPs in my next post, but here’s a brief summary…

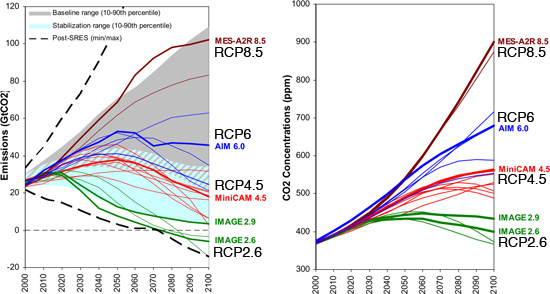

The RCPs were selected after a long series of discussions with the integrated assessment modelling community. A large set of possible scenarios was whittled down to just four. For the convenience of the climate modelling community, they’re labelled with the expected anomaly in radiative forcing (in W/m²) by the year 2100, to give us the set {RCP2.6, RCP4.5, RCP6, RCP8.5}. For comparison, the current total radiative forcing due to anthropogenic greenhouse gases is about 2W/m². But the numbers are just to help remember which RCP is which. The term pathway is the important part – each of the four was chosen as an illustrative example of how greenhouse gas concentrations might change over the rest of the century, under different circumstances. They were generated from integrated assessment models that provide detailed emissions profiles for a wide range of different greenhouse gases and other variables (e.g. aerosols). Here’s what the pathways look like (the darker coloured lines are the chosen representative pathways, the thinner lines show others that were considered, and each cluster is labelled with the model that generated them; click for bigger):

Each RCP was produced by a different model, in part because no single model was capable of providing the detail needed for all four scenarios. This means that the RCPs cannot be directly compared, because they include different assumptions. The graph above uses blue shading to show the range of mitigation scenarios considered, and gray shading for the range of non-mitigation scenarios (the two areas overlap a little).

Here’s a rundown on the four scenarios:

- RCP2.6 represents the lower end of possible mitigation strategies, where emissions peak in the next decade or so, and then decline rapidly. This scenario is only possible if the world has gone carbon-negative by the 2070s, presumably by developing wide-scale carbon-capture and storage (CCS) technologies. This might be possible with an energy mix by 2070 of at least 35% renewables, 45% fossil fuels with full CCS (and 20% without), along with use of biomass, tree planting, and perhaps some other air-capture technologies. [My interpretation: this is the most optimistic scenario, in which we manage to do everything short of geo-engineering, and we get started immediately].

- RCP4.5 represents a less aggressive emissions mitigation policy, where emissions peak before mid-century, and then fall, but not to zero. Under this scenario, concentrations stabilize by the end of the century, but won’t start falling, so the extra radiative forcing at the year 2100 is still more than double what it is today, at 4.5W/m². [My interpretation: this is the compromise future in which most countries work hard to reduce emissions, with a fair degree of success, but where CCS turns out not to be viable for massive deployment].

- RCP6 represents the more optimistic of the non-mitigation futures. [My interpretation: this scenario is a world without any coordinated climate policy, where there is still significant uptake of renewable power, but not enough to offset fossil-fuel driven growth among developing nations].

- RCP8.5 represents the more pessimistic of the non-mitigation futures. For example, by 2070, we would still be getting about 80% of the world’s energy needs from fossil fuels, without CCS, while the remaining 20% come from renewables and/or nuclear. [My interpretation: this is the closest to the “drill, baby, drill” scenario beloved of certain right-wing American politicians].
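As a rough way to keep the four labels straight, here is a minimal Python sketch that treats each RCP's number as its nominal forcing in 2100 and compares it with the roughly 2W/m² present-day figure quoted above. The names `RCP_FORCING_2100`, `CURRENT_FORCING` and `forcing_ratio` are made up for illustration; the values are just the labels themselves, not model output.

```python
# Nominal radiative forcing anomaly by 2100 (W/m^2), read straight off the RCP labels.
# These are labels, not model results.
RCP_FORCING_2100 = {"RCP2.6": 2.6, "RCP4.5": 4.5, "RCP6": 6.0, "RCP8.5": 8.5}

# Approximate present-day anthropogenic greenhouse gas forcing quoted in the post (W/m^2).
CURRENT_FORCING = 2.0


def forcing_ratio(rcp: str) -> float:
    """Return the 2100 label forcing as a multiple of the ~2 W/m^2 present-day figure."""
    return RCP_FORCING_2100[rcp] / CURRENT_FORCING


for name, forcing in RCP_FORCING_2100.items():
    print(f"{name}: {forcing} W/m^2 by 2100, about {forcing_ratio(name):.2f}x today")
```

For RCP4.5 this gives a ratio of 2.25, which matches the "more than double what it is today" description in the list above.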

Jerry showed some early model results for these scenarios from the NCAR model, CCSM4, but I’ll save that for my next post. To summarize:

- 24 modelling groups are expected to participate in CMIP5, and about 10 of these groups have fully coupled earth system models.

- Data is currently in from 10 groups, covering 14 models. Here’s a live summary, currently showing 172TB, which is already more than 5 times all the model data for CMIP3. Jerry put the total expected data at 1-2 petabytes, although in a talk later in the afternoon, Gary Strand from NCAR pegged it at 2.2PB. [Given how much everyone seems to have underestimated the data volumes from the CMIP5 experiments, I wouldn’t be surprised if it’s even bigger. Sitting next to me during Jerry’s talk, Marie-Alice Foujols from IPSL came up with an estimate of 2PB just for all the data collected from the runs done at IPSL, of which she thought something like 20% would be submitted to the CMIP5 archive].

- The model outputs will be accessed via the Earth System Grid, and will include much more extensive documentation than previously. The Metafor project has built a controlled vocabulary for describing models and experiments, and the Curator project has developed web-based tools for ingesting this metadata.

- There’s a BAMS paper coming out soon describing CMIP5.

- There will be a CMIP5 results session at the WCRP Open science conference next month, another at the AGU meeting in December, and another at a workshop in Hawaii in March.
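The data volume figures in the summary above are easy to sanity-check with a few lines of Python. This is only back-of-envelope arithmetic on the numbers quoted in this post; it assumes decimal units (1PB = 1000TB), and the variable names are mine. The IPSL figures are the rough in-talk estimates mentioned above, not official numbers.

```python
TB_PER_PB = 1000  # assumption: decimal units

cmip3_total_tb = 31          # CMIP3 archive hosted at PCMDI
cmip5_so_far_tb = 172        # live summary at the time of writing
cmip5_expected_pb = 2.2      # Gary Strand's estimate of the final archive

# How much bigger is the CMIP5 data so far than all of CMIP3? (about 5.5 times)
ratio_to_cmip3 = cmip5_so_far_tb / cmip3_total_tb

# What fraction of the expected final archive has arrived? (under a tenth)
fraction_received = cmip5_so_far_tb / (cmip5_expected_pb * TB_PER_PB)

# IPSL: roughly 2PB collected, of which something like 20% might be submitted.
ipsl_collected_pb = 2.0
ipsl_submitted_fraction = 0.20
ipsl_submitted_tb = ipsl_collected_pb * ipsl_submitted_fraction * TB_PER_PB

print(f"CMIP5 so far vs CMIP3: {ratio_to_cmip3:.1f}x")
print(f"Share of expected archive received: {fraction_received:.1%}")
print(f"Possible IPSL contribution: {ipsl_submitted_tb:.0f}TB")
```

On these numbers, the IPSL contribution alone would be around 400TB, more than ten times the whole CMIP3 archive.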

Very nice summary, Steve.

A minor production problem for this post–the “click for bigger” link-target for the higher-rez version of your last figure above does not have the RCP tags shown in the low-rez display version of the figure.

The same thing applies to your link to the similar figure in the subsequent post “A first glimpse at model results for the next IPCC assessment” (although there is no low-rez figure displayed there).

Can you provide the source for the last figure (CO2 emissions and concentration)? thanks.

It’s from the IPCC report on the development of the RCPs. Page 45.
