The highlight of the whole conference for me was the Wednesday afternoon session on Methodologies of Climate Model Confirmation and Interpretation, and the poster session the following morning on the same topic, at which we presented Jon’s poster. Here are my notes from the Wednesday session.

Before I dive in, I will offer a preamble for people unfamiliar with recent advances in climate models (or more specifically, GCMs) and how they are used in climate science. Essentially, these are massive chunks of software that simulate the flow of mass and energy in the atmosphere and oceans (using a small set of physical equations), and then couple these to simulations of biological and chemical processes, as well as human activity. The climate modellers I’ve spoken to are generally very reluctant to have their models used to generate predictions of future climate – the models are built to help improve our understanding of climate processes, rather than to make forecasts for planning purposes. I was rather struck by the attitude of the modellers at the Hadley centre at the meetings I sat in on last summer in the early planning stages for the next IPCC reports – basically, it was “how can we get the requested runs out of the way quickly so that we can get back to doing our science”. Fundamentally, there is a significant gap between the needs of planners and policymakers for detailed climate forecasts (preferably with the uncertainties quantified), and the kinds of science that the climate models support.

Climate models do play a major role in climate science, but sometimes that role is over-emphasized. Hansen lists climate models third in his sources of understanding of climate change, after (1) paleoclimate and (2) observations of changes in the present and recent past. This seems about right – the models help to refine our understanding and ask “what if…” questions, but are certainly only one of many sources of evidence for AGW.

Two trends in climate modeling over the past decade or so are particularly interesting: the push towards higher and higher resolution models (which thrash the hell out of supercomputers), and the use of ensembles:

- Higher resolution models (i.e. resolving the physical processes over a finer grid) offer the potential for more detailed analysis of impacts on particular regions (whereas older models focussed on global averages). The difficulty is that higher resolution requires much more computing power, and the higher resolution doesn’t necessarily lead to better models, as we shall see…

- Ensembles (i.e. many runs of either a single model, or of a collection of different models) allow us to do probabilistic analysis, for example to explore the range of probabilities of future projections. The difficulty, which came up a number of times in this session, is that such probabilities have to be interpreted very carefully, and don’t necessarily mean what they appear to mean.

Much of the concern is over the potential for “big surprises” – the chance that actual changes in the future will lie well outside the confidence intervals of these probabilistic forecasts (to understand why this is likely, you’ll have to read on to the detailed notes). And much of the concern is with the potential for surprises where the models dramatically under-estimate climate change and its impacts. Climate models work well at simulating 20th Century climate. But the more the climate changes in the future, the less certain we can be that the models capture the relevant processes accurately. Which is ironic, really: if the climate wasn’t changing so dramatically, climate models could give very confident predictions of 21st century climate. It’s at the upper end of projected climate changes where the most uncertainty lies, and this is the scary stuff. It worries the heck out of many climatologists.

Much of the question is to do with adequacy for answering particular questions about climate change. Climate models are very detailed hypotheses about climate processes. They don’t reproduce past climate precisely (because of many simplifications). But they do simulate past climate reasonably well, and hence are scientifically useful. It turns out that investigating areas of divergence (either from observations, or from other models) leads to interesting new insights (and potential model improvements).

Okay, with that as an introduction, on to my detailed notes from the session (be warned: it’s a long post). Reto Knutti, from ETH Zürich, kicked off this packed session with a talk entitled “Should we believe model predictions of future climate change?” He immediately suggested a better question is “Why should we trust model predictions?” – for example, can we be confident the uncertainty is sufficiently small? And quite clearly, the answer depends on what kind of prediction we are asking of the models. Here are some more equally important questions:

- What makes a model a good model?

- Do resolution and complexity affect skill and uncertainty?

- Are different models really independent?

- How does model tuning affect validity?

- And what metrics should we use to assess the models?

Much of our confidence in climate models is because the models are based on physical principles (at least partly – more on that in a moment) and they reproduce past temperatures. This is pretty much what the IPCC says, but the story is not that simple.

Some parts of the story are easy. For example, Knutti et al. (2008) showed that different models and probabilistic methods agree pretty well. The effect of CO2 on the radiation balance is well understood, and it is consistent with direct observations and detection/attribution studies. (He showed a graph of temperatures by 2100 for the A2 scenario from a range of models, along with their uncertainty ranges, showing they’re pretty consistent).

But for regional predictions, things get more complicated. Differences in the models affect different regions. So, if we need to know the impacts on a particular region, how do we pick a good model? It’s hard to find metrics that link present day climatology with skill at future prediction, and anyway, there’s always the difficult question of how much we’ve used information about current climate already in building the model. Maybe we’re looking at the wrong thing – present day temperature might be the wrong way to validate the model, if present-day observations provide only a weak constraint on future temperature.

What we do know is that the models are steadily improving, and averaging across multiple models gives a better result than any single model. See for example, the analysis by Reichler and Kim 2008:

Extract from figure 1 from Reichler and Kim, 2008. Their caption is "Performance index I² for individual models (circles) and model generations (rows). Best performing models have low I² values and are located toward the left. Circle sizes indicate the length of the 95% confidence intervals. Letters and numbers identify individual models (see supplemental online material); flux-corrected models are labeled in red. Grey circles show the average I² of all models within one model group. Black circles indicate the I² of the multimodel mean taken over one model group."

The figure shows the steady improvement in the ability of models to reproduce current climate through the various Climate Model Inter-comparison Projects (CMIPs), along with a steady reduction in the spread. [Note: CMIPs 1 and 2 were done in the late ’90s. CMIP-3 was heavily used for IPCC AR4. For the next round of the IPCC process, they synchronized the numbers so we have CMIP5 and there was no CMIP4]. The most interesting thing about this analysis is a curious fact that’s been known by climate modellers for years: averaging the outputs of all the models (i.e. the black circles) often gives a much better result than the best individual model. How can this be? It turns out that each model has quirks – regions or phenomena that it doesn’t work so well on. However, each has different quirks, so a big enough ensemble moderates the ‘weirdnesses’ of any one model.

But what are the limits of this averaging over multiple models? The improvement from averaging flattens off after about 5 models, whereas theoretically you’d expect the error to keep shrinking in proportion to 1/sqrt(n) if the models were truly independent. So, this suggests that the number of independent models is much smaller than the actual number of models.
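To make the 1/sqrt(n) argument concrete, here is a minimal sketch of my own (not something Reto showed). It assumes every model has the same error spread `sigma` and every pair of models shares the same error correlation `rho`; both names and the value 0.2 are hypothetical, chosen only for illustration. Under those assumptions the error of the n-model mean stops shrinking once the shared part of the error dominates, and the ensemble behaves like a much smaller set of independent models.

```python
import math

def ensemble_mean_error_sd(n, sigma=1.0, rho=0.0):
    """Std dev of the error of an n-model mean, assuming each model's error
    has std dev sigma and every pair of models has error correlation rho."""
    variance = sigma ** 2 * (1.0 / n + (1.0 - 1.0 / n) * rho)
    return math.sqrt(variance)

def effective_models(n, rho):
    """Number of truly independent models giving the same error reduction."""
    return n / (1.0 + (n - 1) * rho)

if __name__ == "__main__":
    rho = 0.2  # hypothetical shared-error correlation, not a measured value
    print("n  independent  correlated  effective_n")
    for n in (1, 2, 5, 10, 20, 50):
        print(n,
              round(ensemble_mean_error_sd(n, rho=0.0), 3),
              round(ensemble_mean_error_sd(n, rho=rho), 3),
              round(effective_models(n, rho), 2))
```

With `rho = 0` the error falls as 1/sqrt(n) forever; with any positive `rho` it levels off at `sigma * sqrt(rho)`, and the effective number of models can never exceed `1 / rho`.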

We also know that higher resolution in the models reduces temperature biases, but not precipitation bias. Resolving phenomena at small scales has little impact on the large scales. So pushing for higher and higher resolution (which is a common goal in climate modeling these days) has limited use in removing the remaining errors. And there appears to be no correlation between local (regional) bias and resolution. Hence, pushing for even higher resolution has a huge computational cost, for limited return on error reduction.

So how do we establish confidence in prediction from the model? Reto offered the analogy of sending a rover to Mars. You run lots and lots of tests in simulated environments on Earth, to increase confidence that the rover will perform well on Mars. But you can never know whether you missed something. Model forecasts for future climate are like that.

These observations lead Reto to be critical of the approach he characterized as “Build the most complicated model that can be afforded, run it once on the biggest computer, and struggle to make sense of the results”. Instead, he points out that simpler models are often easier to understand, and can be run many times to get probabilistic forecasts. Conclusions: models have improved, but the uncertainty in predictions is unlikely to decrease quickly. Models are useful tools to understand the climate system, and the best and only tools for prediction. But we should not over-sell them.

The second paper, “Confirmation and Adequacy-for-Purpose in Climate Modeling” was by Wendy Parker, a philosophy prof from Ohio U. However, Wendy wasn’t able to come, so Eric Winsberg read her paper for her.

Testing a model’s adequacy is difficult. The first problem is that ‘testing’, at least in the usual sense of the word, is the wrong concept. Not that testing per se is a bad thing, but we should be clear what we mean by ‘testing’ a climate model. A climate model is a complex hypothesis about the climate system. So a test should check whether the climate system is really as the model says. But this doesn’t make sense for today’s models. All sorts of assumptions and simplifications have been made in building the models, so we know that in many ways the model will not accurately simulate current climate. But they are still adequate for many purposes – for example weather models are adequate for predicting next day high temperatures with great skill, despite many simplifications.

So when we test a model, we’re not really testing whether it’s true, we’re testing whether it is adequate: A hypothesis is confirmed if the model fits well enough. But that means we need to be clear what counts as adequate. Another way to tackle this is to ask ‘if my model is to be considered adequate for some task, what else should it be able to do?’. An obvious thing to do is to try it out on different periods of paleoclimate. But the problem here is that models tend to be tuned to 20th century climate, and so maybe shouldn’t be expected to do so well on paleoclimate.

So what are we to do? Wendy suggests we must focus on the process by which the models are built, and look at each variable. If the model fails to simulate variable A within accuracy X in the 20th century climate, this disconfirms that it will simulate variable A within accuracy X in the 21st century climate. Hence, we can falsify each aspect of a model prediction, but not confirm it. [My comment: I don’t think she meant ‘the process by which models are built’ in the sense that I’m interested in it, which is a pity because I think the engineering process by which the models are built tells us a hell of a lot about their validity. More on this in a future post…]

Leo Donner from GFDL spoke next, in a talk entitled “On Clocks and Clouds: Confirming and Interpreting Climate Models as Scientific Hypotheses”. The title is borrowed from Karl Popper’s 1965 essay “Of Clocks and Clouds”, in which Popper distinguished two very different kinds of subject for scientific study:

- Things that are subject to exact measurement (e.g. clocks)

- Things that are subject to statistical approximation (e.g. clouds)

Climate models use bits of both (literally, in the case of clouds, which were first incorporated into climate models twenty years after Popper’s essay). Biological processes are also like clouds, while energy and momentum are the clocks in the models.

The problem is that climate models resolve the world into grid squares that are bigger than clouds, so clouds are parameterized, using a heuristic approach. We do have detailed simulation models of clouds themselves, but a stand-alone cloud resolving model on the scale of 10s of meters competes with global climate models for computational power needs – in other words, detailed cloud models on a global scale are not currently possible.

This then causes problems with the idea of climate models as hypotheses. Formulation from robust principles is not possible because clouds and biological processes are only statistical approximations. For example, this figure from the IPCC AR4 shows how much the models agree/disagree over precipitation changes. The white areas are where fewer than 66% of the models agree over increasing or decreasing precipitation (e.g. rainfall over N. America). Worse still, in some places, 90% of the models agree, and they are all wrong!

One way to probe this is to test the models by varying their tuning parameters across their range, for example as was done in Stainforth et al.’s paper in Nature, Jan 2005. Leo advocated that every model should be subjected to this kind of analysis! He also echoed the point that despite the steady reduction in errors when tested against 20th century climate, such tests are only falsification tests, not model confirmations. The big worry is that future climate is forced differently than the current climate for which the models are developed. [my reaction: this seems very probable, as the climate changes – the question is how fast will model accuracy tail off once we enter new climate regimes?]

Leo’s conclusion is that our knowledge of different climate processes varies tremendously. Our best strategy is to improve the realism of climate processes in the models. There was a question from the audience on how good the IPCC assessment is. Leo’s response was that they have adopted an expert opinion process (a bit like multiple doctors’ diagnoses), and have done a good job at capturing what we know. [As an aside, someone in the audience commented that Popper was disappointed to discover that falsification was as good as we can do in science – he was hoping to establish a much stronger basis for scientific truth, to counter Marxist theory.]

Another philosopher, Eric Winsberg, from U South Florida, was up next, with “Uncertainty and Risk in the Predictions of Global Climate Models”. Eric is especially interested in Quantitative estimates of Margins and Uncertainty (QMU). He began by reminding us that social value judgements have an inevitable role in science, at the very least for deciding what is important, and what is valuable. There is a traditional division of labour between the epistemic and the normative:

- Normative: Everyone has a say in what is considered to be important.

- Epistemic: Only experts have a say in what to believe about the natural world.

But can this division of labour be preserved, especially in the face of a problem as big as climate change? Eric explained Rudner’s argument about the role of social value judgements in empirical hypothesis testing:

- No hypothesis is ever proved 100%;

- So we decide to accept/reject the hypothesis depending on whether the evidence is sufficiently strong;

- The judgment of what ‘sufficiently strong’ means depends on the importance of the hypothesis

- Hence what we accept as empirical truth depends on a social value judgement.

For example, we need higher standards of evidence for vaccine trials than for stamping machines. So, how sure we need to be about a hypothesis before we act on it depends on value judgements. Jeffrey’s response to Rudner was that the proper role of the scientist isn’t to accept or reject hypotheses, it is to assign probabilities to them (which can then be value neutral). Hence, society then gets to decide when to act, and the division of labour is preserved.

But then Douglas points out that scientists’ methodological choices do not lie on a continuum. These choices involve a complex mix of value judgement, such as the relative importance of type 1 vs. type 2 errors. The problems that Douglas points out are particularly acute in climate science. The size and complexity of climate models means that over time, the methodological choices get entrenched and become inscrutable. They are buried in the code and hard to recover [especially the older choices – many climate models are based on legacy code that can be decades old].

Eric’s conclusions were not to oversell the precision of probabilistic forecasts, to acknowledge that non-probabilistic forecasts are value-laden, and that we need to consider the role of values more carefully in climate science. If climate models are called on to make more detailed appraisals about local future climates, it will become more and more difficult to separate value judgement and expertise. So, climate science needs to be open and inclusive, but also more self-critical. [In the brief discussion that followed, there seemed to be some sense of frustration with this advice. Someone asked what happens if climate scientists produce principled probabilistic forecasts, but the public and policymakers don’t understand them? There didn’t seem to be a good answer to this one.]

The next speaker was Lenny Smith, a statistics prof from LSE, in a talk entitled “Quantitative Decision Support Requires Quantitative User Guidance”. Lenny traces problems of how we handle uncertainty in forecasts all the way back to Fitzroy, who coined the term ‘forecast’ back in 1862. However, Fitzroy was criticized for how he presented uncertainty in his storm forecasts, in part because shipping fleets ended up staying in harbour, even when no storm appeared.

Lenny’s talk argued that we have oversold the insights of climate science, and this now threatens the credibility of the field. As examples of ‘overselling’, he showed:

- the schematic of climate processes from the IPCC AR4 WG1 report (which he called a “dangerously schematic schematic”). Such a picture might be interpreted as implying that all the processes shown are resolved in climate models, whereas in fact, for models such as HadCM3, the grid squares in the model are too big to capture some of the processes shown. [Personally, I don’t think this is a fair criticism. Such figures are not used when explaining climate models, and our knowledge of these processes comes from many sources, not just models].

- the UKCP 2009 analysis on the UK Met Office’s website, which offers probability density functions (PDF) for the warmest day at a particular postcode [the tool allows you to see detailed regional predictions for the UK in the 2050s, and has been criticized elsewhere for failing to convey any of the underlying assumptions].

The problem with these probabilistic forecasts is that they don’t tell you the probability that if you acted on the forecast, you would get a big surprise, e.g. of a temperature above the 99% line. The chance of this is actually much higher than 1%, because of variables not accounted for in the model. As an example, in the Andes, the models miss some of the mountain ridges, because the rapid changes in height are much smaller than the model grid squares. Downscaling the model outputs therefore misses many important micro-climates for this region, which means that the models give the wrong results for impact on biological processes. For regional predictions here, it would be better to go back to analysis of climate principles such as blocking.
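A back-of-envelope sketch of why the chance of a surprise can be well above 1%. This is my own illustration, not Lenny’s calculation: it assumes Gaussian errors, and that whatever the model leaves out acts as an independent extra source of spread. The function name and the parameters `model_sd` and `missing_sd` are hypothetical.

```python
from statistics import NormalDist

def surprise_probability(model_sd, missing_sd, quantile=0.99):
    """Chance that the outcome lands above the model's own `quantile` line,
    assuming Gaussian errors plus an independent, unmodelled error source."""
    # Where the model draws its line, relative to its central estimate.
    threshold = NormalDist().inv_cdf(quantile) * model_sd
    # Spread once the unmodelled variability is included.
    total_sd = (model_sd ** 2 + missing_sd ** 2) ** 0.5
    return 1.0 - NormalDist(0.0, total_sd).cdf(threshold)

if __name__ == "__main__":
    for missing_sd in (0.0, 0.5, 1.0, 2.0):
        p = surprise_probability(model_sd=1.0, missing_sd=missing_sd)
        print(f"unmodelled spread {missing_sd:.1f} x model spread: "
              f"P(above the 99% line) = {p:.3f}")
```

If nothing is missing, the answer is 1% by construction. If the unmodelled spread is as large as the modelled spread, the chance of exceeding the “99% line” rises to roughly 5%, and it keeps growing as the missing part grows.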

Hence, it is irrational to base decisions on probability density functions from the models. We need to establish the contours outside of which big surprises are likely, and do a better job of communicating the uncertainties.

We also need more transparency on model development. For example, the UKCIP project makes the claim that their predictions are good because they are based on HadCM3, which is (claimed to be) the best in the world. However, this is not sufficient to establish validity, because what matters is fitness for purpose – i.e. is this model suitable for fine-grained predictions of future climate for these regions? Forecasters need to curtail the tendency for ‘plausible deniability’ (i.e. the tendency to use uncertainty factors to cover the event of the forecast being wrong), and instead think about how to manage expectations. For example, to talk about “climate proofing through to 2080” makes no sense – it sets the wrong expectations, because of the high likelihood of big surprises.

The main message from Lenny’s talk is if we fail to communicate clearly the limits of today’s climate models for quantitative decision-making, we risk the credibility of tomorrow’s climate science and science-based policy in general.

Next up was Elizabeth Lloyd, a philosophy of science prof from Indiana University, talking about “Robustness and inferences to causes”. Elizabeth’s main point was that philosophically speaking, robustness of a result does not demonstrate validity. Hence, robust outcomes from collections of models don’t mean they are all correct. She pointed to the results of ensemble runs (such as the 20th Century analysis in the IPCC AR4), and quoted from Jeff Kiehl’s 2007 paper that the robustness of all the models in simulating the observed 20th century warming “…is viewed as a reassuring confirmation that models, to first order, capture the behaviour of the physical climate system and lends credence to applying the models to projecting future climate”.

Much of Elizabeth’s talk concentrated on the validity of the argument that “robustness proves the mechanism” (i.e. if the models agree, this proves they have the right cause-and-effect processes). She points out that this is a sound argument only if the models are truly independent, and there is some doubt about this (as was pointed out by Reto earlier in this session).

[However, I couldn’t help thinking that by focussing on this one point about robustness, Elizabeth’s analysis was very shallow. Sure, climate scientists do mention robustness of the ensemble of models in simulating 20th Century climate as evidence of correctness, but only as part of a much larger collection of arguments about validity. For example the Kiehl paper she quoted examines in detail the curious point that although the models all simulate 20th century surface temperatures well, they disagree by a factor of two on climate sensitivity. He explores why this could be so, discovers that the difference is largely to do with a larger uncertainty over the role of aerosols in the models, and points out that under future scenarios, forcings from greenhouse gases will dominate forcings from aerosols by an order of magnitude, which therefore limits the role of this particular source of uncertainty in future projections. In other words, robustness of the model ensemble is not used as an argument about their validity, but rather as a point of departure for comparing the various mechanisms in detail, and exploring how and why the models differ. More importantly, as we discovered in our own study of model development, the mechanisms simulated in each model are probed regularly by a continual process of experimenting with the model, and testing hypotheses about the effect of each change to the model.]

The next speaker was David Stainforth from Oxford and LSE (and co-founder of climateprediction.net), on “Uncertainty Estimation in Regional Climate Change: Extracting Robust Information from Perturbed Physics Ensembles”. David (also) addressed the point that climate models are hugely valuable for helping us understand climate processes, but have some problems when used for prediction. Climate science places huge emphasis on the use of models because they are useful instruments for helping understand the physics. Making detailed climate predictions is extraordinarily difficult, as it is essentially a problem of extrapolation. Deficiencies in the model limit the kinds of extrapolation that are possible. These include inadequacy for some phenomena (e.g. methane clathrates, ice sheet dynamics,…) and model uncertainty as some processes are poorly captured (e.g. ENSO, the diurnal cycle of tropical precipitation,…). The lack of model independence is also a problem for ensemble predictions, as is the lack of good metrics for model quality. For example, we’d like to be able to weight the different models in an ensemble according to their quality, but by any global metric, we might end up ruling out all models as they all have some inaccuracies! An alternative might be to do it by region and/or process – i.e. pick the models that are known to be better for the particular processes or regions we are interested in for a particular prediction. But this is a slippery slope because other processes/regions elsewhere in the model might have unknown impacts when we get far enough into the future.

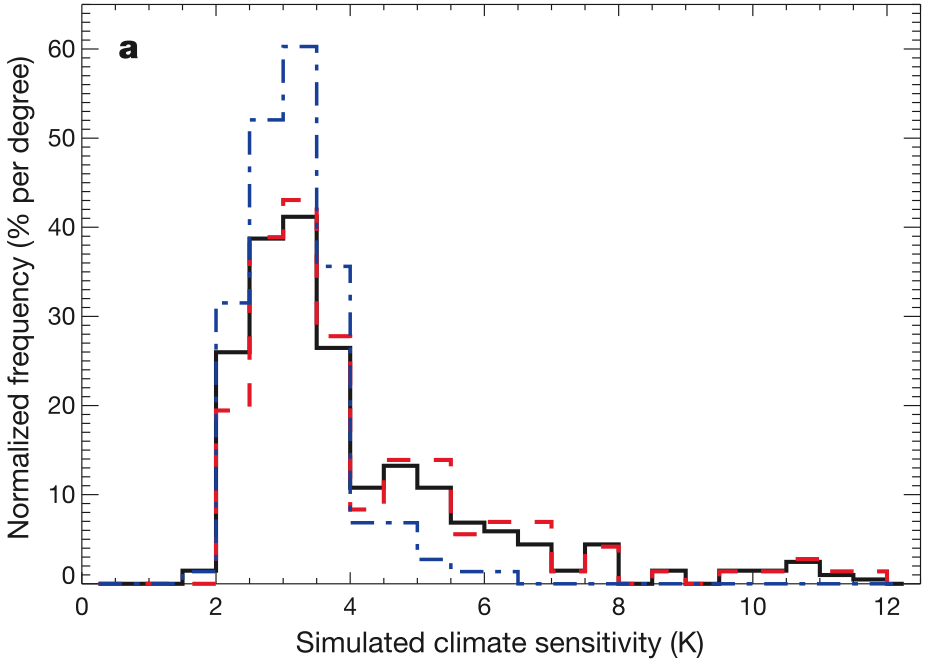

In their classic 2007 paper, David and colleagues catalogued many of the uncertainties in climate models that affect their utility for prediction, and the difficulties of communicating these uncertainties in a way that would be meaningful in decision-making. For example, ensembles can be used to generate probability density functions (PDFs) for things like climate sensitivity, such as the one from Stainforth et al. 2005:

- Fig 2(a) from Stainforth 2005. Original caption: “The frequency distribution of simulated climate sensitivity using all model versions (black), all model versions except those with perturbations to the cloud-to-rain conversion threshold (red), and all model versions except those with perturbations to the entrainment coefficient (blue).”

But the probability density in such analyses doesn’t relate to anything about the real world, because the variability isn’t a sample of any useful space of physical variability. If we want to generate more useful probabilistic analysis, then we have to do more to characterize and reduce the areas of uncertainty in the models. This is where David’s work with ensembles is now focussed.
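A toy illustration of that point, and emphatically not the climateprediction.net setup: take a zero-dimensional relation in which sensitivity is the forcing for doubled CO2 divided by a feedback parameter, and build two “ensembles” that cover exactly the same range of sensitivities. The only difference is how the ensemble designer spaced the members. The parameter range and the 3.5 K threshold are hypothetical; 3.7 W/m² is the commonly quoted forcing for doubled CO2.

```python
F_2X = 3.7  # W/m^2, commonly quoted forcing for doubled CO2

def linspace(lo, hi, n):
    """n evenly spaced values from lo to hi inclusive."""
    step = (hi - lo) / (n - 1)
    return [lo + i * step for i in range(n)]

def fraction_above(sensitivities, threshold):
    """Share of ensemble members with sensitivity above the threshold."""
    return sum(s > threshold for s in sensitivities) / len(sensitivities)

# Hypothetical range for the feedback parameter, in W/m^2/K.
LAMBDA_MIN, LAMBDA_MAX = 0.8, 2.0
N_MEMBERS = 1001

# Design A: perturb the feedback parameter in equal steps.
design_a = [F_2X / lam for lam in linspace(LAMBDA_MIN, LAMBDA_MAX, N_MEMBERS)]
# Design B: cover the same sensitivity range in equal steps of sensitivity.
design_b = linspace(F_2X / LAMBDA_MAX, F_2X / LAMBDA_MIN, N_MEMBERS)

frac_a = fraction_above(design_a, 3.5)
frac_b = fraction_above(design_b, 3.5)

if __name__ == "__main__":
    print(f"Design A: {frac_a:.1%} of members above 3.5 K")
    print(f"Design B: {frac_b:.1%} of members above 3.5 K")
```

Both designs span the same physical range, yet the share of members above 3.5 K roughly doubles from one to the other. The histogram reflects the sampling choice as much as anything about the climate.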

His final comments were that the focus on reducing uncertainty in regional scale predictions is premature, and we must stick to communicating what we understand, even if this is not necessarily what people want.

The final talk of the session was by Muyin Wang from the University of Washington, on “Examples of Selecting Global Climate Models for Regional Projection”. Muyin picked up on the idea that for different purposes/regions, different models might be better. She presented a technique for selecting models, which she has used effectively for predicting sea ice loss. Rather than choosing models that have the best average performance (i.e. measures based on root mean squared error), she selects those that have got the overall trend right. The best six models according to this metric have a much better agreement and predict a much faster ice loss, which is of course, the trend we’ve seen in the last few years (see Overland and Wang 2007 for details of one such study).
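The difference between the two selection criteria can be sketched in a few lines. This is my own toy version, not the procedure from Overland and Wang; the model names, the made-up ice-extent series and the 20% tolerance are all hypothetical.

```python
def linear_trend(values):
    """Least-squares slope of the series against its index."""
    n = len(values)
    mean_t = (n - 1) / 2
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in enumerate(values))
    var = sum((t - mean_t) ** 2 for t in range(n))
    return cov / var

def rmse(sim, obs):
    """Root mean squared error of a simulated series against observations."""
    return (sum((s - o) ** 2 for s, o in zip(sim, obs)) / len(obs)) ** 0.5

def select_by_trend(models, obs, tolerance):
    """Names of models whose trend is within `tolerance` (as a fraction)
    of the observed trend, regardless of any constant offset."""
    obs_trend = linear_trend(obs)
    return sorted(
        name for name, sim in models.items()
        if abs(linear_trend(sim) - obs_trend) <= tolerance * abs(obs_trend)
    )

# Made-up series, purely for illustration.
obs = [7.0, 6.8, 6.5, 6.3, 6.0, 5.8]
models = {
    "model_a": [8.0, 7.8, 7.5, 7.3, 7.0, 6.8],  # biased high, right trend
    "model_b": [6.4, 6.4, 6.4, 6.4, 6.4, 6.4],  # right on average, no trend
    "model_c": [7.1, 6.8, 6.6, 6.2, 6.1, 5.7],  # close on both counts
}

if __name__ == "__main__":
    ranked_by_rmse = sorted(models, key=lambda name: rmse(models[name], obs))
    print("ranked by RMSE:   ", ranked_by_rmse)
    print("selected by trend:", select_by_trend(models, obs, tolerance=0.2))
```

In this toy case the flat `model_b` scores better than `model_a` on RMSE because it sits at the right average level, yet it has no decline at all. A trend-based filter keeps `model_a` and drops `model_b`.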

The question is, can this strategy work for regional predictions? For each region, you would likely end up with a different set of ‘top models’, based on local trends for that region, which is what happened in the sea ice study.

And that was it. An incredibly thought-provoking session that probed a number of important issues about the validity of using climate models for prediction. To summarize:

- We can be very confident that the climate is changing due to anthropogenic carbon emissions (observational studies, paleoclimate analysis and climate models all agree on this). But the more it changes, the less confident we are about what happens next.

- The most important function of climate models is to explore ‘what if’ questions, to improve our understanding of climate processes.

- Climate models have steadily improved in their ability to simulate observed climate, and the range of errors across models is decreasing.

- The models do an excellent job of reproducing temperatures, but are less good at other variables, such as precipitation.

- Getting good simulations of 20th Century climate provides a way of falsifying models, but not of proving them correct.

- The more the climate changes in the future, the less we can be sure that current climate models give us good predictions (because of the probability that different kinds of physical processes kick in).

- Probabilistic forecasts are important, but easily misunderstood. In particular, the chances of temperatures exceeding the 99% confidence line are likely to be much higher than 1%!

- Deterministic forecasts (i.e. from a single run) are useless.

- Choice of a good model depends on the purpose – e.g. for regional predictions, the choice depends on both the region and the variables we are interested in (e.g. temperature, seasonal variation, precipitation, etc).

- Climate modellers tend to be very nervous about use of their models for future predictions, but people outside this community often over-sell the ability of the models.

Thanks Steve! A great piece of reporting.

Thanks very much, SE. Just what I’ve been looking for. (Although not at this time of night. I’ll finish reading it tomorrow.)

Lots of good stuff, but one thing someone said that would get very little agreement I think:

“Resolving phenomena at small scales has little impact on the large scales.”

Pretty much known to be untrue … we need to get storm scales right to get blocking right to get large scales right. The only way the quoted sentence would make sense to me is if the large scale of interest was the global mean.

“So pushing for higher and higher resolution (which is a common goal in climate modeling these days) has limited use in removing the remaining errors.”

Again not much agreement out there.

“And there appears to be no correlation between local (regional) bias and resolution.”

I think, we think, that we need to get to a certain scale … this statement *might* be true over a small range of scales.

“Hence, pushing for even higher resolution has a huge computational cost, for limited return on error reduction.”

The issue is that it’s always a trade-off between increasing complexity (adding more processes), resolution (getting important scales resolved), and ensembles (getting statistical value to the predictions). It seems pretty certain that no one route is alone enough …

Bryan: yes I wondered about that, especially the first quote. This was Reto, talking about global temperature and precipitation biases. So, yes, I think he is talking about global mean. He showed some graphs from a new paper currently under submission that show that increased resolution helps a little with local bias, but not enough to hope that feasible increases in resolution will make a big impact on bias.

“The main message from Lenny’s talk is if we fail to communicate clearly the limits of today’s climate models for quantitative decision-making, we risk the credibility of tomorrow’s climate science and science-based policy in general.”

I approve of this message. Was there a quotable quote from his talk that said this?

[Here’s the verbatim quote from his abstract: “It is suggested that failure to clearly communicate the limits of today’s climate model in providing quantitative decision relevant climate information to today’s users of climate information, would risk the credibility of tomorrow’s climate science and science based policy more generally.” (I think he meant ‘models’ plural) – Steve]

This is why in more mature fields there is usually a significant division of labor between the people doing the science (publishing on new methods / techniques / parameterizations) and the people ‘using the models in anger’ (doing forecasts). The numerical weather prediction community is a good example of this. Most engineering fields where models of similar complexity / fidelity are used to support decision making are a good example of this.

You’re right, comparing models as a form of verification (or heaven forbid, as a form of validation) is building castles in the sand.

Pretty quick.

Thanks for your write-up, I think if the current research was presented this way (where uncertainties in predictive capability are honestly addressed) more often, rather than the treatment things get in the popular press, we would be much better off.
