I’ve spent some time pondering why so many people seem unable or unwilling to understand the seriousness of climate change. Only half of all Americans understand that warming is happening because of our use of fossil fuels. And clearly many people still believe the science is equivocal. Having spent many hours arguing with denialists, I’ve come to the conclusion that they don’t approach climate change in a scientific way (even those who are trained as scientists), even though they often appear to engage in scientific discourse. Rather than assessing all the evidence and trying to understand the big picture, climate denialists start from their preferred conclusion and work backwards, selecting only the evidence that supports the conclusion.

But why? Why do so many people approach global warming in this manner? Previously I speculated that the Dunning-Kruger effect might explain some of this. This effect occurs when people at the lower end of the ability scale vastly overestimate their own competence. Add to this the observation that few people really understand the basic system dynamics: for example, that concentrations of greenhouse gases in the atmosphere will continue to rise even if emissions are reduced, as long as the rate of emissions (from burning fossil fuels) exceeds the rate of removal (e.g. sequestration by the oceans). The Dunning-Kruger effect suggests that people whose reasoning is based on faulty mental models are unlikely to realise it.
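The stock-and-flow point is easy to get wrong intuitively, so here is a minimal sketch of it as a toy bathtub model. All of the numbers are made up for illustration (they are not real emissions or sequestration figures), and the function name `simulate_stock` is my own; the only assumption is that the atmospheric stock changes each year by emissions minus removal.

```python
def simulate_stock(initial_stock, yearly_emissions, yearly_removal):
    """Track a stock that gains `emissions` and loses `yearly_removal` each year.

    Toy model only: units and values are illustrative, not real climate data.
    """
    stock = initial_stock
    history = []
    for emitted in yearly_emissions:
        stock += emitted - yearly_removal  # net flow into the stock this year
        history.append(stock)
    return history


# Hypothetical scenario: emissions fall every year, from 10 units down to 4,
# while removal stays constant at 5 units per year.
emissions = [10, 9, 8, 7, 6, 5, 4]
levels = simulate_stock(initial_stock=100, yearly_emissions=emissions, yearly_removal=5)

for emitted, level in zip(emissions, levels):
    print(f"emissions={emitted:2d}  stock={level}")
```

In this toy run the stock keeps climbing for as long as emissions exceed removal, even though emissions are falling the whole time. It only levels off when the two are equal, and only starts to drop once emissions fall below the removal rate.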

While incorrect mental models and overconfidence might explain some of the difficulty that people have in accepting the scale and urgency of the problem, they don’t really explain the argumentation style of climate denialists, particularly the way in which they latch onto anything that appears to be a weakness or an error in the science, while ignoring the vast majority of the evidence in the published literature.

However, a series of studies by Kahan, Braman and colleagues explains this behaviour very well. In investigating a key question in social epistemology, Kahan and Braman set out to study why strong political disagreements seem to persist in many areas of public policy, even in the face of clear evidence about the efficacy of certain policy choices. These studies reveal a process they term cultural cognition, by which people filter (scientific) evidence according to how well it fits their cultural orientation. The studies explore this phenomenon for contentious issues such as the death penalty, gun control and environmental protection, as well as issues that one might expect would be less contentious, such as immunization and nanotechnology. It turns out that not only do people care about how well various public policies cohere with their existing cultural worldviews, but their beliefs about the empirical evidence are also derived from these cultural worldviews.

For example, in a large-scale survey, they tested people’s perceptions of the risks from global warming, gun ownership, nanotechnology and immunization. They assessed how well these perceptions correlate with a number of characteristics, including gender, education, income, political affiliation, and so on. While political party affiliation correlates well with attitudes on some of these issues, there was a generally stronger correlation across the board with the two dimensions of cultural values identified by Douglas and Wildavsky: ‘group’ and ‘grid’. The group dimension assesses whether people are more oriented towards individual needs (‘individualist’) or the needs of the group (‘communitarian’); and the grid dimension assesses whether people tend to believe societal roles should be well defined and differentiated (‘hierarchical’) or should be more equal and less rigid (‘egalitarian’).

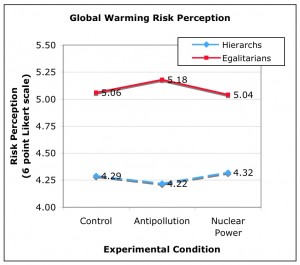

The most interesting part of the study, for me, is an experiment on how perceptions change depending on how the risk of global warming is presented. About 500 subjects were given one of two different newspaper articles to read, both of which summarized the findings of a scientific report about the threat of climate change. In one version, the scientists were described as calling for anti-pollution regulations, while in the other, they were calling for investment in more nuclear power. Both of these groups were compared with a control group who saw neither version of the report. Here are the results (adapted from Kahan et al, with a couple of corrections supplied by the authors):

In all cases, the mean risk assessment of the subjects correlates with their position on these dimensions: individualists and hierarchs are much less worried about global warming than communitarians and egalitarians. But more interestingly, the two different newspaper articles affect these perceptions in different ways. For the article that described scientists as calling for anti-pollution measures, people had quite opposite reactions: for communitarians and egalitarians, it increased their perception of the risk from global warming, but for individualists and hierarchs, it decreased their perception of the risk. When the same facts about the science are presented in an article that calls for more nuclear power, there is almost no effect. In other words, people assessed the facts in the report about climate change according to how well the policy prescription fits with their existing worldview.

There are some interesting consequences of this phenomenon. For example, Kahan and Braman argue that there is really no war over ideology in the US, just lots of people with well-established cultural worldviews, who simply decide what facts (scientific evidence) to believe based on these views. The culture war is therefore really a war over facts, not ideology.

The studies also suggest that certain political strategies are doomed to failure. For example, a common strategy when trying to resolve contentious political policy issues is to attempt to detach the policy question from political ideologies, and focus on the available evidence about the consequences of the policy. Kahan and Braman’s studies show this won’t work, because different cultural worldviews prevent people from agreeing on what the consequences of a particular policy will be (no matter what empirical evidence is available). Instead, they argue that policymakers must find ways of framing policy so that it affirms the values of diverse cultural worldviews simultaneously.

As an example, for gun control, they suggest offering a bounty (e.g. a tax rebate) for people who register handguns. Both pro- and anti-gun-control groups might view this as beneficial to them, even though they disagree on the nature of the problem. For climate change, the equivalent policy prescriptions include tradeable emissions permits (which appeal to individualists and hierarchs), and more nuclear power (which egalitarians and hierarchs tend to view as less risky when presented as a solution to global warming).

Update: There’s a very good opinion piece by Kahan in the January 21, 2010 issue of Nature.